Want to explore local LLMs but stuck in analysis paralysis?

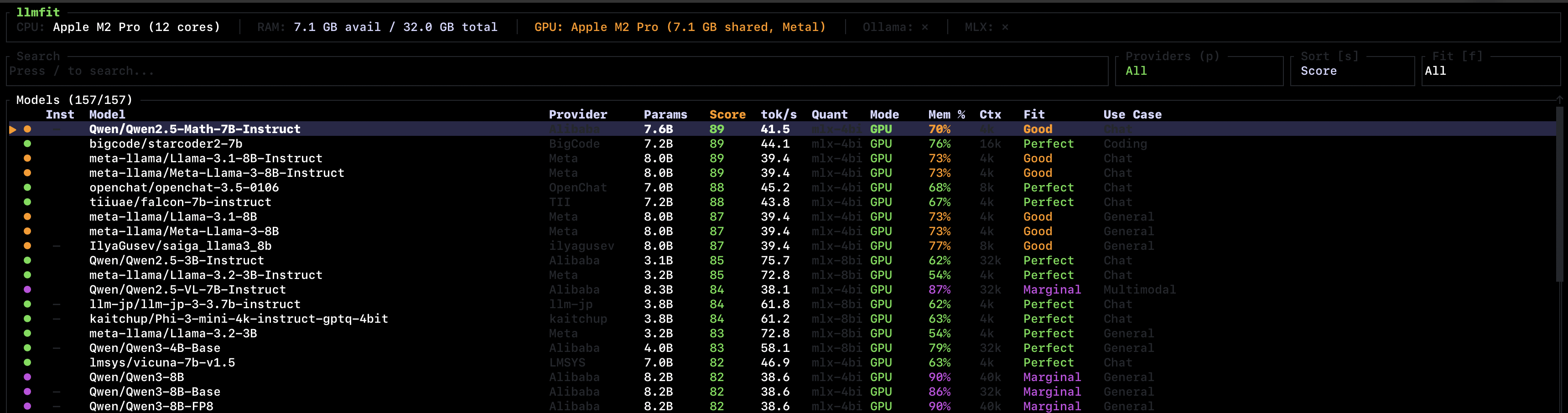

llmfit detects your CPU, RAM, and GPU, then scores 157 models from 30 providers to show which ones fit your system. For each model it shows parameter count, score, estimated speed (tokens/s), memory usage, quantization, and a fit rating (Perfect/Good/Marginal).
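To make the memory and speed columns less of a black box, here is a minimal sketch of how such estimates are commonly derived. It assumes weight memory is roughly parameters × bits per weight / 8, adds a hypothetical 20% overhead for the KV cache and runtime buffers, and uses the rule of thumb that decoding speed is bounded by memory bandwidth divided by model size. The function names, overhead factor, and headroom thresholds are all illustrative assumptions, not llmfit's actual formulas.

```python
# Hypothetical sketch of hardware-fit estimation. The overhead factor,
# headroom thresholds, and bandwidth rule of thumb are assumptions,
# not llmfit's actual formulas.
from typing import Optional

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes): params x bits / 8."""
    return params_billion * bits_per_weight / 8

def required_gb(params_billion: float, bits_per_weight: float,
                overhead: float = 1.2) -> float:
    """Weights plus an assumed 20% allowance for KV cache and runtime buffers."""
    return weights_gb(params_billion, bits_per_weight) * overhead

def fit_rating(needed_gb: float, available_gb: float) -> Optional[str]:
    """Map memory headroom to a label; returns None if the model does not fit."""
    if needed_gb > available_gb:
        return None
    headroom = 1 - needed_gb / available_gb
    if headroom >= 0.5:
        return "Perfect"
    if headroom >= 0.2:
        return "Good"
    return "Marginal"

def est_tokens_per_sec(params_billion: float, bits_per_weight: float,
                       bandwidth_gb_s: float) -> float:
    """Rough upper bound for decoding: each token reads every weight once."""
    return bandwidth_gb_s / weights_gb(params_billion, bits_per_weight)

if __name__ == "__main__":
    available = 16.0  # example: 16 GB of usable memory
    for name, params, bits in [("7B @ 4-bit", 7, 4), ("13B @ 8-bit", 13, 8), ("70B @ 4-bit", 70, 4)]:
        need = required_gb(params, bits)
        label = fit_rating(need, available) or "does not fit"
        print(f"{name}: needs ~{need:.1f} GB -> {label}")
```

With 16 GB available, a 7B model at 4-bit lands comfortably in "Perfect", a 13B model at 8-bit squeezes in as "Marginal", and a 70B model at 4-bit does not fit at all, which is why quantization matters so much for what you can run locally.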

The score is a weighted composite of quality, speed, fit, and context window, with weights adjusted per use case (coding, reasoning, chat, etc.).
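A use-case-weighted composite like this can be sketched as a normalized weighted average. The component names and weight values below are hypothetical, chosen only to illustrate the idea; llmfit's real weights are not given here. Each component is assumed to already be normalized to a 0 to 100 scale.

```python
# Hypothetical sketch of a use-case-weighted composite score.
# Component names and weights are illustrative assumptions, not llmfit's values.
from typing import Dict, List, Tuple

USE_CASE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "coding":    {"quality": 0.5, "speed": 0.2, "fit": 0.2, "context": 0.1},
    "reasoning": {"quality": 0.6, "speed": 0.1, "fit": 0.2, "context": 0.1},
    "chat":      {"quality": 0.3, "speed": 0.4, "fit": 0.2, "context": 0.1},
}

def composite_score(components: Dict[str, float], use_case: str) -> float:
    """Weighted average of component scores, each assumed normalized to 0-100."""
    weights = USE_CASE_WEIGHTS[use_case]
    total = sum(weights.values())
    return sum(w * components[name] for name, w in weights.items()) / total

def rank(models: Dict[str, Dict[str, float]], use_case: str) -> List[Tuple[str, float]]:
    """Sort models by composite score for the given use case, best first."""
    scored = [(name, composite_score(c, use_case)) for name, c in models.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

if __name__ == "__main__":
    models = {
        "big-slow":   {"quality": 90, "speed": 30, "fit": 60, "context": 80},
        "small-fast": {"quality": 60, "speed": 90, "fit": 100, "context": 50},
    }
    for use_case in ("reasoning", "chat"):
        print(use_case, rank(models, use_case))
```

With these made-up numbers, the larger, slower model wins for reasoning (77 vs. 70) while the smaller, faster one wins for chat (79 vs. 59). The same hardware can yield a different "best" model depending on what you plan to do with it.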

brew install llmfit

llmfit

The interactive TUI lets you search, filter by provider, and sort by score. It also integrates with Ollama: you can see which models are already installed and download new ones directly.